
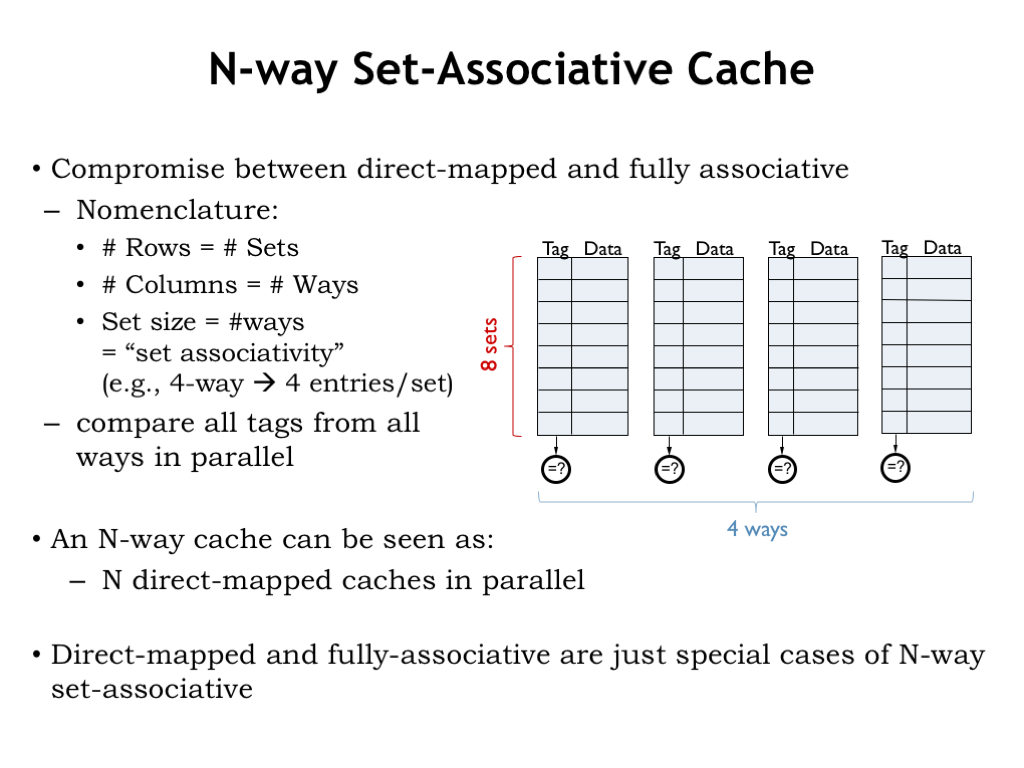

7/29/2023

Direct mapped vs set associative

Which Mapping is the Best?

Direct mapping is the easiest configuration to implement, but at the same time it is the least efficient. For example, if the CPU asks for a given memory address (1,000 in this case), the controller will load a 64-byte line from memory and store it in the cache (1,000 to 1,063). In the future, if the CPU requires data from the same address or the addresses right after it (1,000 to 1,063), they will already be in the cache.

This becomes a problem when the CPU needs, one after the other, two addresses that sit in the memory block mapped to the same cache line. For example, if the CPU first asks for address 1,000 and then asks for address 2,000, a cache miss will occur because these two addresses are inside the same memory block (128 KB being the block size). The cache line mapped to that block, on the other hand, holds the line from address 1,000 to 1,063. So the cache controller will load the line from address 2,000 to 2,063 into the first cache line, evicting the older data. That is the reason why direct mapping is the least efficient cache mapping technique and has largely been abandoned.

Fully associative mapping is somewhat the opposite of direct mapping. There is no hard linking between the lines of the memory cache and the RAM memory locations. The cache controller can store any address in any line. This cache mapping technique is the most efficient, with the highest hit rate. However, it is also the hardest and most expensive to implement.

As a result, set-associative mapping, which is a hybrid between fully associative and direct mapping, is used. Here, every block of memory is linked to a set of lines (how many depends on the kind of set-associative mapping), and each line can hold the data from any address in the mapped memory block. On a 4-way set-associative cache, each set can hold up to four lines from the same memory block. With a 16-way configuration, that figure grows to 16. When the slots in a mapped set are all used up, the controller evicts the contents of one of the slots and loads a different line from the same mapped memory block.

Increasing the number of ways of a set-associative cache, for example from 4-way to 8-way, gives you more cache slots per set. However, if you don't increase the amount of cache, the size of each linked memory block increases. Basically, increasing the number of slots in each set without increasing the overall cache size means that each set is linked to a larger memory block, which reduces efficiency because of the increased number of flushes. On the other hand, increasing the cache size means that you'd have more lines in each set (assuming the set size is also increased). This means a higher number of linked cache lines for every memory block. Generally, this increases the hit rate, but there's a limit to how much it can improve the overall figure.

My main issue with a homework problem is trying to figure out the different parts of the chart. I have a 3-way set-associative cache with 2-word blocks and a total size of 24 words. My main problem is figuring out how to find the index and offset for an associative (3-way set) cache. And would it look like the following? Since I have 3 sets, is it $3 \bmod 3 = 0$, so $0$ is the index? Is $180 \bmod 3 = 0$ the index as well? Somehow I feel like this is all wrong, because of some sample problems I have seen. But in class, this is how I remember it being explained. Helping me understand the fundamentals of this problem would be so helpful, and I appreciate all the help I can get. Sorry if it doesn't seem like I have put a lot of work into this, but I am familiar only with direct-mapped caches, and the sample problems I have seen aren't very explanatory.

Before getting to your question, let's recall what set-associativity means, and how one can figure out how to split the address into tag, index and offset. If you prefer to learn by example, jump to after the fold.

Cache

A cache is just a faster, yet smaller, memory. It might take a long time to access data in main memory, but it is very fast to access the cache. So we would rather keep data in our small and fast cache than in our big and slow main memory. However, the cache is small, so it cannot hold all the data of main memory. Instead, it holds some part of the data stored in main memory. Which part, we don't know in advance: it depends on the actual usage of the data (i.e., on the software we run).
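The geometry in the question (a 3-way set-associative cache with 2-word blocks and 24 words in total) can be worked through with a short sketch. It assumes that addresses are word addresses and that blocks are placed by the usual modulo rule; neither is stated in the question, and the helper name `split_address` is made up for illustration. Under those assumptions the cache has 24 / 2 = 12 blocks and 12 / 3 = 4 sets, so the 3 is the number of ways per set rather than the number of sets.

```python
# Assumptions (not stated in the question): addresses are word addresses,
# and a block goes to set (block number mod number of sets).
TOTAL_WORDS = 24
WORDS_PER_BLOCK = 2
WAYS = 3

NUM_BLOCKS = TOTAL_WORDS // WORDS_PER_BLOCK  # 12 blocks in the cache
NUM_SETS = NUM_BLOCKS // WAYS                # 4 sets of 3 blocks each


def split_address(word_address):
    """Hypothetical helper: split a word address into (tag, index, offset)."""
    offset = word_address % WORDS_PER_BLOCK         # word within the block
    block_number = word_address // WORDS_PER_BLOCK  # which memory block
    index = block_number % NUM_SETS                 # which set it maps to
    tag = block_number // NUM_SETS                  # identifies the block in the set
    return tag, index, offset


for address in (3, 180):
    tag, index, offset = split_address(address)
    print(f"address {address}: tag={tag} index={index} offset={offset}")
# address 3: tag=0 index=1 offset=1
# address 180: tag=22 index=2 offset=0
```

With byte addressing or a different word size the numbers would change, but the order of the steps (offset first, then index, then tag) would stay the same.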

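The conflict between addresses 1,000 and 2,000 described for direct mapping can be reproduced with a small simulation. This is only a sketch under stated assumptions: lines are 64 bytes and aligned, sets are chosen by the modulo rule (a simplification of the block-to-line linking described in the text), the replacement policy is least recently used, and the 16-line cache size and the function name `count_hits` are invented for the example.

```python
from collections import OrderedDict

LINE_SIZE = 64  # bytes per cache line, as in the example in the text


def count_hits(addresses, num_lines, ways):
    """Simulate an LRU set-associative cache and return the number of hits.

    ways=1 behaves as a direct-mapped cache; ways=num_lines behaves as a
    fully associative one. LRU replacement is an assumption of this sketch.
    """
    num_sets = num_lines // ways
    sets = [OrderedDict() for _ in range(num_sets)]
    hits = 0
    for address in addresses:
        line_number = address // LINE_SIZE
        index = line_number % num_sets   # set this line maps to
        tag = line_number // num_sets    # distinguishes lines within the set
        lines = sets[index]
        if tag in lines:
            hits += 1
            lines.move_to_end(tag)       # mark as most recently used
        else:
            if len(lines) >= ways:
                lines.popitem(last=False)  # evict the least recently used line
            lines[tag] = True
    return hits


# The CPU alternates between the two conflicting addresses.
trace = [1000, 2000] * 4

print(count_hits(trace, num_lines=16, ways=1))   # direct mapped: 0
print(count_hits(trace, num_lines=16, ways=2))   # 2-way set associative: 6
print(count_hits(trace, num_lines=16, ways=16))  # fully associative: 6
```

In this trace the direct-mapped cache never hits because each access evicts the line the next access needs, while two ways are already enough to keep both lines resident. Going from 2 ways to 16 adds nothing here, which is consistent with the point that extra associativity helps only up to a limit.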